With Discord in talks with Palantir, it's time to move on from having my chats controlled by a platform that's only going to continue to go downhill. We've been through many a service through the years, from things like AIM, MSN Messenger, Gtalk, and Mumble, to Skype, eventually Discord, and probably other platforms that I've forgotten about. I'm well versed in self-hosting at this point, and am done with changing platforms. It's time to self-host a chat service.

Life update

So I've been pretty quiet here

Life's been pretty busy over the last year or two! It's nearly been two years since I've had anything at all interesting to say here, but I'm finally putting out an update.

I've mostly spent the last two years away from tech, just letting it serve me for once as best as I could instead of serving it. My services have mostly been stable, and minimal changes have taken place. I'm running a few more private services to make my life easier, and I swapped out the decrepit airsonic-advanced server for a more up-to-date Navidrome.

My living situation has changed quite a bit as well, which is why I'm no longer nearly as obsessed with tech as I had been. I'm living with my partner now, along with a cat! It's been quite the change of pace, and I've gotten much more into home automation with Home Assistant, so I'm sure I'll have something interesting to post about that at some point.

In terms of audio gear, there's not been much change in my desk setup, though my partner is into vinyl records, so we've been exploring that front. I'm sure I'll have something nerdy to say about that later as well, so possibly look forward to that, probably expensive, deep dive.

For those of you with a keen eye, I have changed my name (not legally yet, unfortunately), so that's been a bit of a change in life. Overall, it's life as usual, just a lot happier and more comfortable than I've ever been before. Hopefully I'll have some interesting things to come in 2026!

Why you don't want to do what I do

How I got here

So I see a lot of confusion from people who seem to think that they should also get a system like mine, or otherwise replicate my software setup on their machines. I figured I should explain why I have this setup, and why you probably don't want what I have, as not understanding how things work is likely to lead you into many of the pain points that have led me directly here. I should probably start with the list of things that I don't need, but that people seem to think are a massive gain.

CPU

I do not need 32 cores for my system. I rarely use more than 2% of this CPU, even while running several VM's and sometimes doing compiles on it. Even doing 10 transcodes on the CPU at once still doesn't fully use it, and if transcodes are something you need, I'd likely recommend a GPU to handle the encoding. This includes integrated GPU's on consumer CPU's.

RAM

RAM is something that will depend massively on your workload. I personally use ZFS, and many TB of storage on mechanical drives. Feeding RAM to that can help performance substantially, and this is where most of my RAM needs to be. I run a software stack that's pretty large by the standards of the affordable homelab space, and other than ZFS ARC, my actual services max out at about 8GB of RAM, 16GB if I want to push it to "just testing" levels. You probably don't need 128GB of RAM.

PCIE

This was one of the biggest reasons that I went with the Epyc platform. This platform supports bifurcation, allowing me to put more SSD's into PCIE slots, along with having enough lanes to run even more devices. Many consumer platforms may appear to have two or three PCIE 16x slots and two or three PCIE 1x slots, but don't actually have enough lanes to drive them all. If you try to use 2 16x devices, they will usually drop to 8x, and on some boards the second slot is only ever 8x. If you don't plan on much expansion, this is likely not something you care about. I needed the ability to run a few SAS cards for storage, and currently run PCIE networking at 10 gig speeds, and want the ability to do 40/100 gig at some point in the future. Between having proper passthrough support, as well as a massive amount of lanes to actually get devices running at full speed, I can do what I wouldn't ever attempt on most consumer platforms.
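To make the lane math concrete, here is a rough sketch that adds up the link widths a set of cards would like and compares the total to a lane budget. The 128 figure is the PCIE lane count of a single-socket Epyc 7551p; the 20-lane consumer budget and the card list are illustrative assumptions, not measurements from any particular board.

```shell
#!/bin/sh
# Sum the PCIE link widths requested by a list of cards.
lanes_wanted() {
  total=0
  for width in "$@"; do
    total=$((total + width))
  done
  echo "$total"
}

# Report whether a lane budget covers the requested widths.
# Usage: lane_check BUDGET WIDTH...
lane_check() {
  budget=$1
  shift
  wanted=$(lanes_wanted "$@")
  if [ "$wanted" -le "$budget" ]; then
    echo "ok: $wanted of $budget lanes used"
  else
    echo "short: $wanted lanes wanted, only $budget available"
  fi
}

# Hypothetical card list: two SAS HBAs (x8 each), a 10 gig NIC (x8),
# and two PCIE SSDs (x4 each).
lane_check 128 8 8 8 4 4   # Epyc 7551p lane count
lane_check 20 8 8 8 4 4    # assumed consumer CPU budget
```

On a board that comes up short, the cards will usually still work, just at a narrower link width than they were built for.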

VM's

VM's are generally something useful in the homelab, but putting your NAS/SAN into a VM has serious implications that you should understand before trying that. Most consumer platforms have pretty bad support for things like PCIE passthrough, be it just having general bugs, or not separating IOMMU groups, leaving you unable to pass devices through at all. If you choose to run ZFS, you should give it direct access to the drive controller to help it detect and correct errors. If you have a few TB of throwaway data, do as you please, but if you plan to have significant data stored, and/or you care about that data at all, I can't recommend using USB devices, or otherwise giving ZFS indirect access to the storage, as you will eventually find issues, and it may be too late. If rebuilding all of your storage is not an issue, do as you see fit, but don't say you weren't warned.
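One way to see how a board splits up its devices is to list the IOMMU groups the kernel exposes. This is a small sketch that assumes a Linux host with the IOMMU enabled, where groups show up under /sys/kernel/iommu_groups; the function name and the optional path argument are only there for illustration. Devices that share a group generally have to be passed through together, so a storage controller sharing a group with unrelated devices is a warning sign.

```shell
#!/bin/sh
# Print each IOMMU group and the PCI addresses of the devices in it.
# The optional argument overrides the sysfs path, mainly for testing.
list_iommu_groups() {
  root=${1:-/sys/kernel/iommu_groups}
  for group in "$root"/*; do
    [ -d "$group/devices" ] || continue   # nothing here if the IOMMU is off
    echo "group ${group##*/}:"
    for dev in "$group"/devices/*; do
      [ -e "$dev" ] || continue           # skip groups with no devices
      echo "  ${dev##*/}"
    done
  done
}

list_iommu_groups
```

If this prints nothing at all, the IOMMU is most likely disabled in firmware or on the kernel command line.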

What you probably should do

If you aren't planning on getting a board that has all of the server features, compressing everything into one machine is likely going to cause you more pain than it will help. I'd also not recommend running storage in a VM unless you understand the implications, and plan for it. These days, I highly recommend TrueNAS Scale if you don't personally want to manage the system all at a command line, and even if you do, some of the reporting and automation features are just nice to have, even as someone that managed my storage on headless Alpine for many years. It can also host some basic VM's and "Apps" to replace most people's docker needs. If you don't know exactly why you don't want it at a technical level, it's probably a good option for you.

Further questions?

If that wasn't a complete enough explanation of why my system is likely overkill, and why I don't recommend what works for me, feel free to reach out to me on Discord as kdb424, or via email at blog@kdb424.xyz, as I love questions and am happy to help!

An Epyc Change

I got tired of managing systems

After messing with GlusterFS for a while, learning its ups and downs, I've determined that I'm moving back to a single physical node, for the most part. I had a few goals in mind when looking at hardware.

- Enough CPU/RAM to replace everything I currently run

- PCIE expansion. Lots of lanes for everything I ram in there.

- IPMI! I don't want to have to go plugging in display/keyboard constantly.

This pretty much left me with 2 major system types to look at: multi-socket Intel Xeons, or AMD Epyc systems. Old Xeon systems are even more work due to the absolute requirement of understanding UMA and NUMA and, more importantly, going through the steps of setting it up. Add the higher power draw, and with that the noise, and I quickly ruled them out. I set my eyes on the Epyc 7551p and the Supermicro H11SSL-NC motherboard to cover every single thing I wanted out of the system. At the cost of about $700 USD to fully replace the core of my old system, I felt like this was a reasonable price for everything I get.

Specs

- AMD Epyc 7551p 32 core (64 thread)

- Supermicro H11SSL-NC

- 128GB Samsung 2133 Registered ECC

- 2x256GB Inland Professional SSD

- 3x10TB Western Digital drives (white label pro red)

- 2x1TB Crucial MX500

- 2x2TB Intel P3600 PCIE SSD

- Fractal Define R5

- HP NC550SFP

- LSI SAS HBA

Build issues and complications

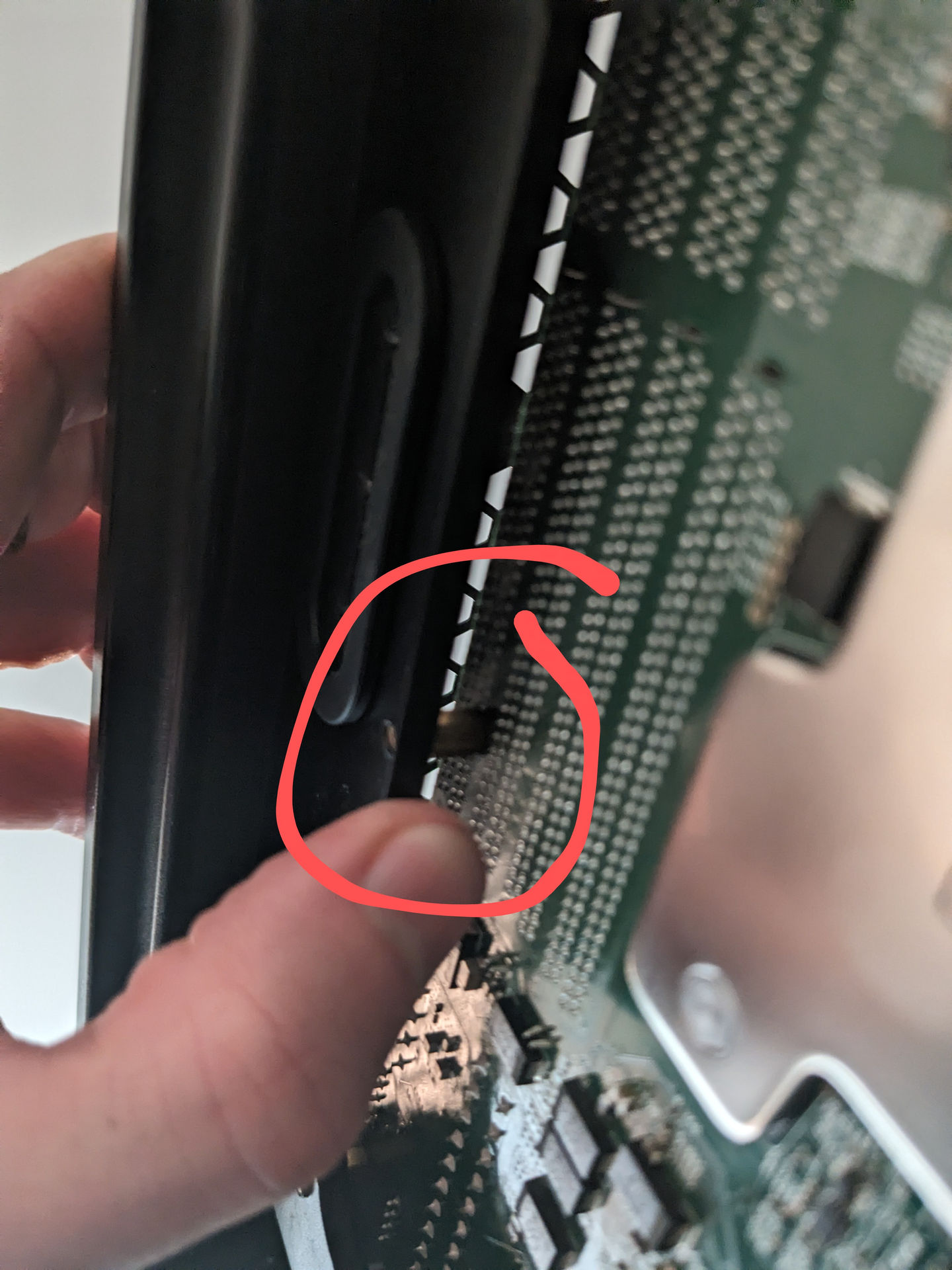

Overall, this was a fairly simple build in terms of hardware. The motherboard is mostly ATX standard with one exception (photo later). One oversight I had was not having VGA anything to hook up to the system when it showed up. The onboard video is forced to be the only enabled display by default, and I should have seen that coming. IPMI is also disabled by default, so I wasn't able to even remote into it until I got a display out.

Turns out it's mostly ATX. Whoops, need to remove a standoff.

The plan!

The current goal is to get it running on Proxmox to allow for easy separation of duties, and isolation of public/private hosted services. I'm planning on running something to manage ZFS and act as a SAN for the network, probably TrueNAS Scale, as I have recommended it many times and want to see what it's like today, despite knowing well how to manage a fully headless system. I'm pulling a second gen Ryzen 8 core system out of the network, so I'll also be creating a virtual machine to directly take its place to make transitioning easy. After that, it's up to whatever I want. I'll be hosting a semi public Nix Hydra instance for friends since I have too much CPU grunt going to waste. I'll also be able to turn transcoding back on for my Jellyfin server, as I know this should easily be able to transcode 4 or more streams while leaving plenty left for the rest of the services. After that, who knows.

What it looks like thus far.

Glusterfs

What's a Glusterfs?

Glusterfs is a network filesystem with many features, but the important ones here are its ability to live on top of another filesystem, and offer high availability. If you have used SSHFS, it's quite similar in concept, giving you a "fake" filesystem from a remote machine, and as a user, you can use it just like normal without caring about the details of where the files are actually stored, except "over there I guess". Glusterfs, unlike SSHFS, can be stored across multiple machines, similar to network RAID. If one machine goes down, the data is still all there and well.

Why even bother?

A few years ago I decided that I was tired of managing docker services per machine and wanted them in a swarm. No more thinking! If a machine goes down, the service is either still up (already replicated across servers like this blog), or will come up on another server once it sees the service isn't alive. This is well and good until you need the SAN to go down. Now all of the data is missing, and the servers don't know, and you basically have to kick the entire cluster over to get it back alive. Not exactly ideal to say the least.

Side rant. Feel free to skip if you only care about the tech bits.

While ZFS has kept my data very secure over the ages, it can't always prevent machine oddity. I have had strange issues such as Ryzen bugs that could lock up machines at idle, a still unexplained random networking hang (despite changing 80% of the machine, including all disks, operating system, and network cards) that comes back 10 seconds later, and so on. As much as I always want to have a reliable machine, updates will require service restarts, reboots need done, and honestly, I'm tired of having to babysit computers. Docker swarm and NixOS are in my life because I don't want to babysit, but solve problems once, and be done with it. Storage stability was the next nail to hit. Despite arguably being a small problem, it still reminded me that computers exist when I wasn't in the mood for them to exist.

Why Glusterfs as opposed to Ceph or anything else?

Glusterfs sits on top of a filesystem. This is the feature that took me to it over anything else. I have trusted my data to ZFS for many years, and have done countless things that should have cost me data, including "oops, I deleted 2TB of data on the wrong machine", and having to force power off machines (usually SystemD reasons), and all of my data is safe. The very few things it couldn't save me from, it will happily tell me where there's corruption and I can replace the limited data from a backup. With all of that said, Glusterfs happily lives on top of ZFS, even letting me use datasets just as I have been for ages. It does however let me expand over several machines by using Glusterfs. There's a ton of modes to Glusterfs much as any "RAID software", but I'm sticking to effectively a mirror (RAID 1) in essence. Let's look at the hardware setup to explain this a bit better.

The hardware

planex

- Ryzen 5700

- 32GB RAM

- 2x16TB Seagate Exos

- 2x1TB Crucial MX500

    pool
    --------------------------
    exos
      mirror-0
        wwn-0x5000c500db2f91e8
        wwn-0x5000c500db2f6413
    special
      mirror-1
        wwn-0x500a0751e5b141ca
        wwn-0x500a0751e5aff797
    --------------------------

morbo

- Ryzen 2700

- 32GB RAM

- 5x3TB Western Digital Red

- 1x10TB Western Digital (replaced a red when it died)

- 2x500GB Crucial MX500

    red
      raidz2-0
        ata-WDC_WD30EFRX-68EUZN0_WD-WCC4N3EVYXPT
        ata-WDC_WD100EMAZ-00WJTA0_1EG9UBBN
        ata-WDC_WD30EFRX-68EUZN0_WD-WCC4N6ARC4SV
        ata-WDC_WD30EFRX-68EUZN0_WD-WCC4N6ARCZ43
        ata-WDC_WD30EFRX-68N32N0_WD-WCC7K2KU0FUR
        ata-WDC_WD30EFRX-68N32N0_WD-WCC7K7FD8T6K
    special
      mirror-2
        ata-CT500MX500SSD1_1904E1E57733-part2
        ata-CT500MX500SSD1_2005E286AD8B-part2
    logs
      mirror-1
        ata-CT500MX500SSD1_1904E1E57733-part1
        ata-CT500MX500SSD1_2005E286AD8B-part1
    --------------------------------------------

kif

- Intel i3 4170

- 8GB RAM

- 2x256GB Inland SSD

    pool
    -------------------------------
    inland
      mirror-0
        ata-SATA_SSD_22082224000061
        ata-SATA_SSD_22082224000174
    -------------------------------

Notes

These machines are a bit different in terms of storage layout. Morbo/Planex both actually store decent amounts of data, and kif is there just to help validate things, so it doesn't get a lot of anything. We'll see why later. Would having Morbo/Planex both have identical disk layouts increase performance? Yes, but so would SSD's, for all of the data. Tradeoffs.

ZFS setup

I decided to make my setup simpler on all of my systems, and just keep the mount points for glusterfs the same. On each system, I created a dataset named gluster and set its mountpoint to /mnt/gluster. This makes things a ton easier, since I don't have to remember which machine has data where, and keeps things streamlined. It may look something like this.

    zfs create pool/gluster
    zfs set mountpoint=/mnt/gluster pool/gluster

If you have one disk, or just want everything on gluster, you could just mount the entire drive/pool to somewhere you'll remember, but I find it simplest to use datasets, and I have to migrate data from outside of gluster on the same array to inside of gluster. That's it for ZFS specific things.
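Before handing a path to gluster, it can be worth confirming the dataset is really mounted there, since an unmounted dataset leaves /mnt/gluster as a plain directory on the root disk. A minimal sketch, assuming a Linux system where the mountpoint utility (util-linux or busybox) is available; the function name is made up for this example.

```shell
#!/bin/sh
# Succeed only if the given directory is an active mount point.
brick_parent_ready() {
  dir=$1
  if mountpoint -q "$dir" 2>/dev/null; then
    echo "$dir is mounted"
  else
    echo "$dir is NOT a mount point, check 'zfs mount' first" >&2
    return 1
  fi
}

# /mnt/gluster is the mountpoint used throughout this post.
brick_parent_ready /mnt/gluster || echo "not ready for gluster yet"
```

Running this on each machine before creating a volume is a cheap way to catch a dataset that failed to mount after a reboot.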

Creating a gluster storage pool

    gluster volume create media replica 2 arbiter 1 planex:/mnt/gluster/media morbo:/mnt/gluster/media kif:/mnt/gluster/media force

This may look like a blob of text that means nothing, so let's look at what it does.

    # Tells gluster that we want to make a volume named "media"
    gluster volume create media

    # Replica 2 arbiter 1 tells gluster to use the first 2 servers to store the
    # full data in a mirror (replicate) and set the last as an arbiter. This acts
    # as a tie breaker for the case that anything ever disagrees, and you
    # need a source of truth. It costs VERY little data to store this.
    replica 2 arbiter 1

    # The server name, and the path that we are using to store data on them
    planex:/mnt/gluster/media
    morbo:/mnt/gluster/media
    kif:/mnt/gluster/media

    # Normally you want gluster to create its own directory. When we use datasets,
    # the folder will already exist. This is something you should understand can
    # cause issues if you point it at the wrong place, so check first
    force

If all goes well, you can start the volume with

    gluster volume start media

You'll want to check the status once it's started, and it should look something like this.

    Status of volume: media
    Gluster process                             TCP Port  RDMA Port  Online  Pid
    ------------------------------------------------------------------------------
    Brick planex:/mnt/gluster/media             57715     0          Y       1009102
    Brick morbo:/mnt/gluster/media              57485     0          Y       1530585
    Brick kif:/mnt/gluster/media                54466     0          Y       1015000
    Self-heal Daemon on localhost               N/A       N/A        Y       1009134
    Self-heal Daemon on kif                     N/A       N/A        Y       1015144
    Self-heal Daemon on morbo                   N/A       N/A        Y       1854760

    Task Status of Volume media
    ------------------------------------------------------------------------------

With that taken care of, you can now mount your Gluster volume on any machine that you need! Just follow the normal instructions for your platform to install Gluster, as it will be different for all of them. On NixOS at the time of writing, I'm using this to manage my Glusterfs for my docker swarm for any machine hosting storage. https://git.kdb424.xyz/kdb424/nixFlake/src/commit/5a1c902d0233af2302f28ba30de4fec23ddaaac9/common/networking/gluster.nix
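The status output shown above also lends itself to a quick scripted check. The sketch below reads `gluster volume status` output on standard input and prints any brick whose Online column is not Y. It assumes each brick fits on one line, as in the sample above; the function name is hypothetical, the "morbo is down" input is made up for the example, and long brick paths that gluster wraps onto a second line would need extra handling.

```shell
#!/bin/sh
# Read "gluster volume status VOLUME" output on stdin and print the
# bricks that are not online. Exits non-zero if any are found.
offline_bricks() {
  awk '
    $1 == "Brick" && $(NF - 1) != "Y" { print $2; bad = 1 }
    END { exit bad }
  '
}

# Example with made-up captured output; on a real node this would be
#   gluster volume status media | offline_bricks
offline_bricks <<'EOF' || echo "some bricks are offline"
Brick planex:/mnt/gluster/media 57715 0 Y 1009102
Brick morbo:/mnt/gluster/media N/A N/A N N/A
Brick kif:/mnt/gluster/media 54466 0 Y 1015000
EOF
```

Because the function exits non-zero when something is offline, it can be dropped into a cron job or any monitoring hook that reacts to exit codes.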

Using gluster volumes

Once a volume is started, you can mount it by pointing at any machine that has data in the volume. In my case I can mount from planex/morbo/kif, and even if one goes down, the data is still served. You can treat this mount exactly as if you were storing files locally, or over NFS/SSHFS, and any data stored on it will be replicated and stay highly available if a server needs to go down for maintenance or has issues. This provides a bit of a backup (in the same way that a RAID mirror does, so never rely on online machines for a full backup). Not only does this give you higher uptime on your data, but if you already replicate data on a schedule to an always-on machine as a backup, this does the same thing in real time, which is a nice side effect.
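As a concrete illustration, this sketch prints an /etc/fstab entry that mounts the media volume from planex and names morbo and kif as fallback servers. The /mnt/media target and the function name are assumptions, and backup-volfile-servers is the option name used by recent mount.glusterfs versions, so check the man page for your release before relying on it.

```shell
#!/bin/sh
# Print an fstab line for a gluster volume.
# Usage: gluster_fstab_line VOLUME TARGET PRIMARY [BACKUP...]
gluster_fstab_line() {
  volume=$1
  target=$2
  primary=$3
  shift 3
  # Join the remaining hosts with ":" as the mount option expects.
  backups=$(IFS=:; echo "$*")
  opts="defaults,_netdev"
  if [ -n "$backups" ]; then
    opts="$opts,backup-volfile-servers=$backups"
  fi
  echo "$primary:/$volume $target glusterfs $opts 0 0"
}

gluster_fstab_line media /mnt/media planex morbo kif
```

The named server is only needed to fetch the volume layout at mount time, which is why listing fallbacks matters: without them, a mount attempted while planex is down would fail even though the data is still available from the other machines. A one-off equivalent would be `mount -t glusterfs -o backup-volfile-servers=morbo:kif planex:/media /mnt/media`.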

Now what?

My docker swarm can now be served without interruption from odd quirks, and this replaces my need for ZFS send/recv backups (on live machines, please have a cold store backup in a fire box if you care about your data, along with an off site backup). This lets me continue to forget that computers exist so I can focus on things I want to work on, like eventually setting up email alerts for ZFS scrubs, or S.M.A.R.T. scans with any drive warnings. I can continue to mostly forget about the details, and stay focused on the problems that are fun to solve. Yes, I could host my data elsewhere, but even ignoring the insane cost that I won't pay, I get to actually own my data, and not have a company creeping on things. Just because I have nothing to hide doesn't mean I leave my door unlocked.

Obligatory "things I say I won't do, but probably will later"

- Dual network paths. Network switch or cable can knock machines offline.

- Dual routers! Router upgrades always take too long. 5 minutes offline isn't acceptable these days!

- Discover the true power of TempleOS.